AI tools powering vertical micro drama in 2026: a data-driven teardown

This teardown is based on what we saw. It's not a ranked list. We looked at the AI video tools that keep showing up in top vertical micro drama on YouTube Shorts in 2026, and we grouped them by what they can do. Every claim beyond basic 9:16 output (the tall shape of a phone screen) carries a [VERIFY] flag. That way readers can check the original sources.

Vertical micro drama on Shorts is one of the fastest-moving content types in 2026. It's become a test bed for AI video work. Our goal here is narrow. We want to show what creators seem to use, what the output looks like, and what no current tool has fixed yet. We're not ranking tools. We're not saying one is "best for" a category. And we're not making price claims without direct source links. For more on the audience and format, see our companion piece on vertical micro drama on YouTube Shorts. That piece covers why this format took off and how channels build episodes.

TL;DR

- This is based on what we saw in top tall-screen vertical micro drama. It's not a ranked list of "the best" AI video tools.
- Claims beyond basic 9:16 output are marked [VERIFY]. That way readers can check the tool makers' own docs.
- No single tool handles keeping a character looking the same over time, shot continuity, and dialogue sync from start to finish. Top channels chain tools together instead of picking one.

Key takeaways

| Tool | What we saw it used for | What it does well | Cost pattern | Known limit |
|---|---|---|---|---|
| Kling (Kuaishou) | Scene generation | Strong native 9:16 output [VERIFY for latest version] | Credits-based, varies | Prompts for Western faces can be tricky [VERIFY] |
| Runway Gen-4 | Using a photo to match the character | Photo-to-video with a reference frame | Price per second | Shot length limits [VERIFY] |
| Luma Dream Machine | Keeping the character the same across keyframes | Start-frame to end-frame [VERIFY] | Credit pools | Motion drifts across shots [VERIFY] |
| Google Veo 3 / 3.1 | Sharp scene output | Multi-second shots with camera control [VERIFY] | Tiered access via Gemini | Access is gated [VERIFY] |
| OpenAI Sora | Scenes that make sense | Scenes that hold together [VERIFY] | Bundled with ChatGPT tiers | Access comes and goes [VERIFY] |
| Pika | Fast trying again | Quick render cycles [VERIFY] | Monthly credits | Shots don't match each other [VERIFY] |
| Minimax Hailuo | Follows the prompt well | Text-to-video [VERIFY] | Credits-based | Few English docs [VERIFY] |

How we looked at this — method

"Which AI tools do top vertical micro drama shows use?" is harder to answer than it looks. A trustworthy piece has to show its work.

We didn't run a lab test. We didn't get special vendor access. We watched public uploads. We wrote down what we saw. We flagged where we weren't sure.

Sample set

The sample is top vertical micro drama uploads on YouTube Shorts. We pulled from public ReelShort-style channels, DramaBox mirrors, and indie creator channels that posted 60-to-90 second dramatic episodes during Q1 2026. We leaned toward channels with more than 50 uploads in the format. That way we wouldn't read too much into a single hit.

How we matched tools to shows

We used three signal types, from most trusted to least. First, creators naming tools in their video descriptions, pinned comments, or behind-the-scenes links. Second, visual tells that trained eyes can sometimes spot, such as motion patterns, face glitches, or lighting that a given tool tends to make. Third, public forum talk where a creator said which tools they use.

We want to be upfront: spotting tools by eye isn't perfect. Anything we couldn't pin down directly is marked [VERIFY].

What this is not

This isn't a lab test. We're not claiming one tool's output beats another's. We're not quoting prices without a source link. We're not saying "most creators use X." We can't see every team making this stuff. We only see the public tail.

How often we'd recheck

Every three months. The AI world in 2026 moves fast. Any claim more than 90 days old should be treated as maybe-outdated. Where we mark [VERIFY], check the tool maker's own docs before you make a production call. For a wider look across tools, the OutlierKit blog has a bigger survey in best AI tools for video marketing.
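The 90-day rule above is easy to apply by hand, but a small check can help if you keep a log of claims and the dates you last confirmed them. This is a minimal sketch under our own assumptions: the claim names and dates are made up for illustration, and `is_maybe_outdated` is a hypothetical helper, not part of any tool.

```python
from datetime import date, timedelta

RECHECK_WINDOW = timedelta(days=90)  # the 90-day window suggested above


def is_maybe_outdated(checked_on: date, today: date) -> bool:
    """Return True when a claim was last checked more than 90 days ago."""
    return today - checked_on > RECHECK_WINDOW


# Hypothetical claim log: names and dates are placeholders, not real findings.
claims = {
    "tool A shot length limit": date(2026, 1, 5),
    "tool B vertical output": date(2026, 3, 20),
}

today = date(2026, 4, 15)
stale = [name for name, checked in claims.items() if is_maybe_outdated(checked, today)]
print(stale)
```

Anything that lands in `stale` is a candidate for a fresh look at the tool maker's docs.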

What actually matters in AI video for micro drama

Before we look at any one tool, we need to agree on what to look at. These five things stand on their own. A reader can use the same list to check any new tool that drops next month. No need to reread this piece. They aren't weighted. They aren't scored. They don't add up to a ranking.

Vertical (9:16) native output quality

Was the AI trained on tall-screen video? Or does it just make wide video and crop it? Native 9:16 (the tall shape of a phone screen) keeps faces in the middle of the frame. Cropped video pushes faces to the edges.
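To see why cropping matters, it helps to work out how much of a wide frame survives a 9:16 crop. The sketch below assumes a plain centered crop and a 1920x1080 source frame. Both are our assumptions for illustration, not a description of how any tool crops.

```python
def center_crop_9x16(width: int, height: int) -> tuple[int, int, int, int]:
    """Return (left, top, right, bottom) of the largest centered 9:16 box."""
    if width * 16 > height * 9:
        # Frame is wider than 9:16, so trim the sides.
        crop_w, crop_h = height * 9 // 16, height
    else:
        # Frame is already 9:16 or narrower, so trim top and bottom.
        crop_w, crop_h = width, width * 16 // 9
    left = (width - crop_w) // 2
    top = (height - crop_h) // 2
    return left, top, left + crop_w, top + crop_h


def kept_width_fraction(width: int, height: int) -> float:
    """Share of the original width that survives the crop."""
    left, _, right, _ = center_crop_9x16(width, height)
    return (right - left) / width


box = center_crop_9x16(1920, 1080)
print(box, round(kept_width_fraction(1920, 1080), 3))
```

Under these assumptions, a wide 1920x1080 frame keeps a bit under a third of its width. A face framed off-center in the wide shot can end up at the edge of the crop or outside it.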

Character consistency across scenes

How well the AI keeps the same face, clothes, and body shape across different shots. This is the hardest thing to do. It's where [VERIFY] markers show up most. Companies often claim they've fixed it. But the fixes don't always hold up across a 60-second scene.

Shot-to-shot continuity

Keeping the background, lighting, and camera logic the same when you film the same spot from different angles. Micro drama cuts between shots a lot. If a tool changes the wall color or window spot between shots, you'll have to fix it by hand.

Cost per finished second

Not the sticker price. The real cost, after you throw away the bad tries. A cheap tool with a high fail rate can cost more per usable second than a pricey tool that works on the first try. Treat the listed price as the best case, not the real case.
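The arithmetic behind this is simple: divide the listed price by the share of renders you keep. The sketch below uses made-up prices and keep rates to show how a cheap tool can lose. None of the numbers come from a real price list.

```python
def cost_per_usable_second(price_per_second: float, usable_rate: float) -> float:
    """Listed price divided by the fraction of rendered seconds you keep."""
    if not 0 < usable_rate <= 1:
        raise ValueError("usable_rate must be greater than 0 and at most 1")
    return price_per_second / usable_rate


# Hypothetical numbers for illustration only.
cheap = cost_per_usable_second(price_per_second=0.05, usable_rate=0.20)
pricey = cost_per_usable_second(price_per_second=0.15, usable_rate=0.75)

print(f"cheap tool:  {cheap:.2f} per usable second")
print(f"pricey tool: {pricey:.2f} per usable second")
```

With these placeholder inputs, the tool with the lower sticker price costs more per usable second. Your own keep rate is the number to measure.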

Reference image and keyframe conditioning

Does the tool let you give it a photo? Giving the AI a photo so the character matches that look is called reference image conditioning. A keyframe is a set-up image for the start or end of a shot. If you can lock a reference photo into the first frame of every shot, you stop a lot of drift problems.

Across all five, the clearest finding is this. Reference image conditioning, together with start and end keyframes, drives most of what the eye sees as "quality."

A tool with average raw output but strong reference photo support makes more usable footage than a sharper tool with weaker reference support. Why? Because scenes fail when the character or the background drifts, not because of frame-level issues.

Capability matrix (based on what we saw, not ranked)

The table below maps seven tools against the five things above. Cells use three labels. "Observed" means we saw it in public samples or creator docs. "Not observed" means we did not see it. "[VERIFY]" means the claim exists but we couldn't confirm it ourselves. The table skips "best" and "worst" words on purpose.

| Tool | 9:16 native | Character consistency | Shot continuity | Reference / keyframe | Cost pattern |
|---|---|---|---|---|---|
| Kling (Kuaishou) | Observed | [VERIFY] | [VERIFY] | Observed | Credits-based, variable |
| Runway Gen-4 | Observed | Observed via reference | [VERIFY] | Observed | Per-second pricing |
| Luma Dream Machine | Observed | [VERIFY] | [VERIFY] | Observed (keyframes) | Credit pools |
| Google Veo 3 / 3.1 | [VERIFY] | [VERIFY] | [VERIFY] | [VERIFY] | Tiered access via Gemini |
| OpenAI Sora | Observed | [VERIFY] | [VERIFY] | [VERIFY] | Bundled with ChatGPT tiers |
| Pika | Observed | Not observed at scene scale | [VERIFY] | Observed | Monthly credits |
| Minimax Hailuo | Observed | [VERIFY] | [VERIFY] | [VERIFY] | Credits-based |

Cell labels: "Observed" = we saw it in public samples or creator docs. "Not observed" = we did not see it in our sample. "[VERIFY]" = the claim exists but we couldn't confirm it against the original sources at the time we wrote this.
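If you want to reuse the matrix when a new tool drops, one option is to keep it as data and query it. The sketch below copies the cell labels from the table above (cost column left out). The helper names `observed_for` and `verify_count` are our own illustration.

```python
COLUMNS = (
    "9:16 native",
    "Character consistency",
    "Shot continuity",
    "Reference / keyframe",
)

# Cell labels transcribed from the capability matrix above.
MATRIX = {
    "Kling (Kuaishou)": ("Observed", "[VERIFY]", "[VERIFY]", "Observed"),
    "Runway Gen-4": ("Observed", "Observed via reference", "[VERIFY]", "Observed"),
    "Luma Dream Machine": ("Observed", "[VERIFY]", "[VERIFY]", "Observed (keyframes)"),
    "Google Veo 3 / 3.1": ("[VERIFY]", "[VERIFY]", "[VERIFY]", "[VERIFY]"),
    "OpenAI Sora": ("Observed", "[VERIFY]", "[VERIFY]", "[VERIFY]"),
    "Pika": ("Observed", "Not observed at scene scale", "[VERIFY]", "Observed"),
    "Minimax Hailuo": ("Observed", "[VERIFY]", "[VERIFY]", "[VERIFY]"),
}


def observed_for(column: str) -> list[str]:
    """Tools whose cell in `column` starts with 'Observed'."""
    idx = COLUMNS.index(column)
    return [tool for tool, cells in MATRIX.items() if cells[idx].startswith("Observed")]


def verify_count(tool: str) -> int:
    """How many cells for `tool` are still marked [VERIFY]."""
    return sum(cell == "[VERIFY]" for cell in MATRIX[tool])


print(observed_for("Reference / keyframe"))
print(verify_count("Google Veo 3 / 3.1"))
```

Adding a new tool is one more row. The queries still describe what was observed and do not turn the matrix into a ranking.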

The tools showing up in top micro drama

Each sub-section says what we saw in public uploads. It also covers how creators describe using each tool. The order of the sub-sections is not a ranking. Where a company claims something but we couldn't confirm it in a real-world sample, we mark it [VERIFY].

Kling (Kuaishou)

Kling shows up a lot in public creator breakdowns of tall-screen vertical micro drama. It's common on channels that post daily. Output in 9:16 usually holds together across a single shot. Motion looks real.

The most common creator gripe: prompts are fussy when you render Western faces. Eye color and hair detail can take more tries than prompts based on East Asian faces [VERIFY].

Cost is credits-based. It varies by version and region. Versions matter here. Check any claim tied to a specific version against the current Kling release notes before you commit.

Runway Gen-4

Runway Gen-4 shows up in workflows where a reference photo is the main need. Creators describe feeding in a locked character portrait as the first frame. Then they let Gen-4 render the motion. It's one of the few steady ways to keep the face the same across an episode.

Pricing is per second. That makes costs per finished shot easier to plan than credit systems. Shot length limits are [VERIFY] and have shifted across releases. Check the current product page before you script long takes.

Luma Dream Machine

Luma shows up in workflows built on start-and-end keyframes. A keyframe is a set-up image for the start or end of a shot. A creator hands in both. The model fills in the motion between them. That's a neat trick for micro drama. You can plan a scene beat with still art first, then add motion.

Output looks real for single shots. Motion still drifts across shot boundaries when you render the same character a lot [VERIFY]. Pricing is credit-pool based. Tiers change now and then.

Google Veo 3 / 3.1

Veo 3 and 3.1 come up in micro drama talk for sharp scene output and longer per-shot length [VERIFY]. Access is gated by Gemini tiers, and it has shifted across Veo releases. That makes Veo less predictable in public creator setups than Kling or Runway.

Camera control is one of the main reasons creators mention Veo [VERIFY]. But the feature set has changed enough across versions that any claim should be rechecked against the current Veo docs.

The remaining three (Pika, Minimax Hailuo, and OpenAI Sora) are less central in public breakdowns of top-performing micro drama, but they appear in specific roles.

Pika

Pika is cited for iteration speed — fast cycle times that let a creator reroll a shot several times within a short block of production time [VERIFY]. Creators describe using it for establishing shots and short B-roll rather than character dialogue sequences. Shot-to-shot character variation across multiple Pika generations is the most commonly flagged limitation in creator commentary [VERIFY].

Minimax Hailuo

Hailuo appears in East-Asia-origin micro drama pipelines and is cited for prompt adherence in text-to-video generation [VERIFY]. Its English-language documentation is less thorough than that of the other providers, which is a practical barrier for Western production teams that rely on detailed parameter documentation before committing to a stack [VERIFY].

OpenAI Sora

Sora appears in micro drama commentary around narrative coherence at the scene level [VERIFY] — the sense that a scene "understands" itself across its frames rather than drifting.

Availability has been inconsistent through the ChatGPT tier bundling [VERIFY], which has made Sora a less predictable anchor for daily production pipelines than tools with steadier access.

What no AI tool solves yet

Every teardown that only describes what works is half a picture. These five limitations recur across every tool in the stack in 2026, and no current provider claims a complete solution. Naming them explicitly is more useful than implying they are solvable with better prompting.

1. Multi-minute character consistency.

Holding a single face, wardrobe, and proportions across a two-to-three-minute sequence composed of multiple independent generations still requires manual reference locking and reroll budget. No tool currently ships this end-to-end at production quality.

2. Hands.

Close-up hand motion — picking up an object, gesturing in conversation — remains the most visually obvious tell in AI micro drama. Creators work around this by framing shots above the hands or cutting away at the moment hands enter the frame.

3. Long-form continuity.

Background consistency across reverse-angle cuts of the same location (same furniture positions, same lighting direction) is not solved by any tool at production-grade reliability. Scripts often avoid the problem by staying in a single angle or cutting to a new location instead of the reverse.

4. Audio-visual sync for dialogue.

End-to-end dialogue with convincing lip sync remains inconsistent [VERIFY]. Top channels overwhelmingly split voice and video into two steps and sync in post rather than rely on a single-pass dialogue render.

5. Brand-specific character design.

If a channel has a recurring original character that needs to look the same every episode for months, current tools require a disciplined reference image library plus human QA. No provider ships a persistent identity system that fully removes the human-in-the-loop requirement.

How top micro drama channels combine these tools

Three workflow patterns recur across public creator breakdowns. They are listed in arbitrary order, not ranked. Each pattern trades something for something else, and channels often switch patterns depending on the episode type.

Figure: multi-tool chain workflow pattern. Observed chain in public quality-priority breakdowns: scene generation feeds reference-conditioned renders, which feed keyframe continuity passes, which assemble in a conventional editor. Order varies by shot.

Pattern A: single-tool all-in (speed priority)

One model for every shot, minimal reference locking, rerolls absorbed into the schedule. Used by channels publishing daily or near-daily to minimize tooling overhead. The tradeoff is visible identity drift across episodes, which these channels often accept as part of the format aesthetic.

Pattern B: multi-tool chain (quality priority)

A still-image model produces a locked reference portrait, the video model conditions on that portrait frame to render motion, a separate pass handles any long-duration shot where keyframe interpolation helps continuity, and the finished cuts assemble in a conventional editor. This is the pattern drawn in the diagram above and is commonly described at the quality-priority end of the market. It costs more per finished second, and creators describe it as roughly halving the reroll rate on dialogue scenes [VERIFY].

Pattern C: AI + live-action hybrid

Dialogue close-ups are shot with real actors against a plain background, and AI handles establishing shots, environment transitions, and any scene that would be expensive to film live. This pattern sidesteps the hands and lip sync problems by letting live-action carry the moments that AI currently fails on, while AI carries the moments that are expensive to produce live.

For a broader survey of how these tools are applied beyond micro drama, including narrative film and longer formats, the OutlierKit blog has a wider piece on best AI tools for movie creation, and a companion piece on best AI tools for YouTube video creation.

Frequently asked questions

Tool selection

Which AI video tool should I start with for vertical micro drama?

There's no single answer for every show. Tools group by what they do well. Kling and Runway Gen-4 show up most in public creator breakdowns of tall-screen vertical micro drama. Luma comes up a lot for keyframe work. Veo, Sora, Pika, and Hailuo show up for smaller jobs. Start with the tool your scene needs most. A dialogue scene with the same character needs strong reference image work. An establishing shot needs clean raw output. Run the same short prompt through two or three tools for a week. Track your own reroll rate (running the AI again when the first result isn't good) before you pick a set of tools.

Are any of these tools actually native 9:16?

We've seen native 9:16 output from Kling, Runway Gen-4, Luma, Sora, Pika, and Hailuo in public samples. From the output alone, we can't tell if the AI was actually trained on tall-screen data or if the product just crops to that shape. We'd need direct docs from each company to know. For Veo, native tall-screen support is marked [VERIFY], because the way you get vertical output has changed across versions. If tall-screen quality matters for your scene, test the same prompt in both shapes. Then compare how faces and hands sit in the frame.

How much does character consistency actually work in 2026?

It works for single shots and short scenes when you lock a reference photo into the first frame. It starts to drift across many shots. It breaks down when you need the same character in very different lighting. Companies have shared ways of keeping a character looking the same over time. These are marked [VERIFY] throughout this piece. Public demos don't always match what you see in a real 60-second scene. Write your story around what the tools can do now, not what's coming later.

Are there open-source tools that show up in micro drama production?

Open-source video tools show up in hobby micro drama. But they rarely show up in top vertical uploads on YouTube Shorts. The gap isn't just quality. It's how the work gets done. Teams that ship daily episodes need hosted APIs. Those give them steady output day after day. This could change fast. It's worth checking again every few months.

Workflow

What does a typical multi-tool workflow look like?

Here's a common pattern from public creator breakdowns. First, make a reference portrait in a still-image tool. Then use that photo as the first frame in a video tool like Runway Gen-4 or Luma. Next, render the motion in whichever scene tool gave the cleanest first try for that shot. Last, cut it together in a normal editor like CapCut or Premiere. Teams use this chain because no single tool handles all three jobs well: reference photos, motion, and editing.

How do teams handle dialogue and lip sync?

Top vertical micro drama teams handle dialogue after the video is made. The video tool renders the acting. A separate audio step adds the voice. Lip sync from all-in-one tools is still shaky [VERIFY]. So a common trick is to render with the mouth closed or unclear. Then add voice later. Or use a separate lip-sync tool on top of the base shot. Expect this to change fast. Check each tool's notes when you plan a script with lots of dialogue.

How often should I re-evaluate the stack?

Every three months is a good pace. The AI world in 2026 moves fast. A gap you see in January can close by April. A cheap tool one quarter can get pricey the next when credit costs shift. Keep a small test set of three to five scenes that match your usual work. Run them through each tool every 90 days.
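A quarterly test like this is easier to compare if you log attempts per scene and reduce them to one number per tool. The sketch below is one way to do that. The metric (average extra renders per kept shot) is our own working definition of reroll rate, and the tool names, scene names, and counts are placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class SceneRun:
    tool: str      # hypothetical tool label
    scene: str     # which test scene was rendered
    attempts: int  # total renders, counting the one that was kept


def rerolls_per_scene(runs: list[SceneRun]) -> dict[str, float]:
    """Average number of extra renders per scene, grouped by tool."""
    attempts: dict[str, int] = defaultdict(int)
    scenes: dict[str, int] = defaultdict(int)
    for run in runs:
        if run.attempts < 1:
            raise ValueError("attempts must be at least 1")
        attempts[run.tool] += run.attempts
        scenes[run.tool] += 1
    return {tool: (attempts[tool] - scenes[tool]) / scenes[tool] for tool in attempts}


# Placeholder log for one quarter's test set.
runs = [
    SceneRun("tool_a", "kitchen argument", 1),
    SceneRun("tool_a", "rooftop reveal", 3),
    SceneRun("tool_a", "office establishing shot", 2),
    SceneRun("tool_b", "kitchen argument", 4),
    SceneRun("tool_b", "rooftop reveal", 4),
    SceneRun("tool_b", "office establishing shot", 1),
]

rates = rerolls_per_scene(runs)
print(rates)
```

Keeping the same scenes each quarter is what makes the numbers comparable. A change in the rate then reflects the tool, not the script.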

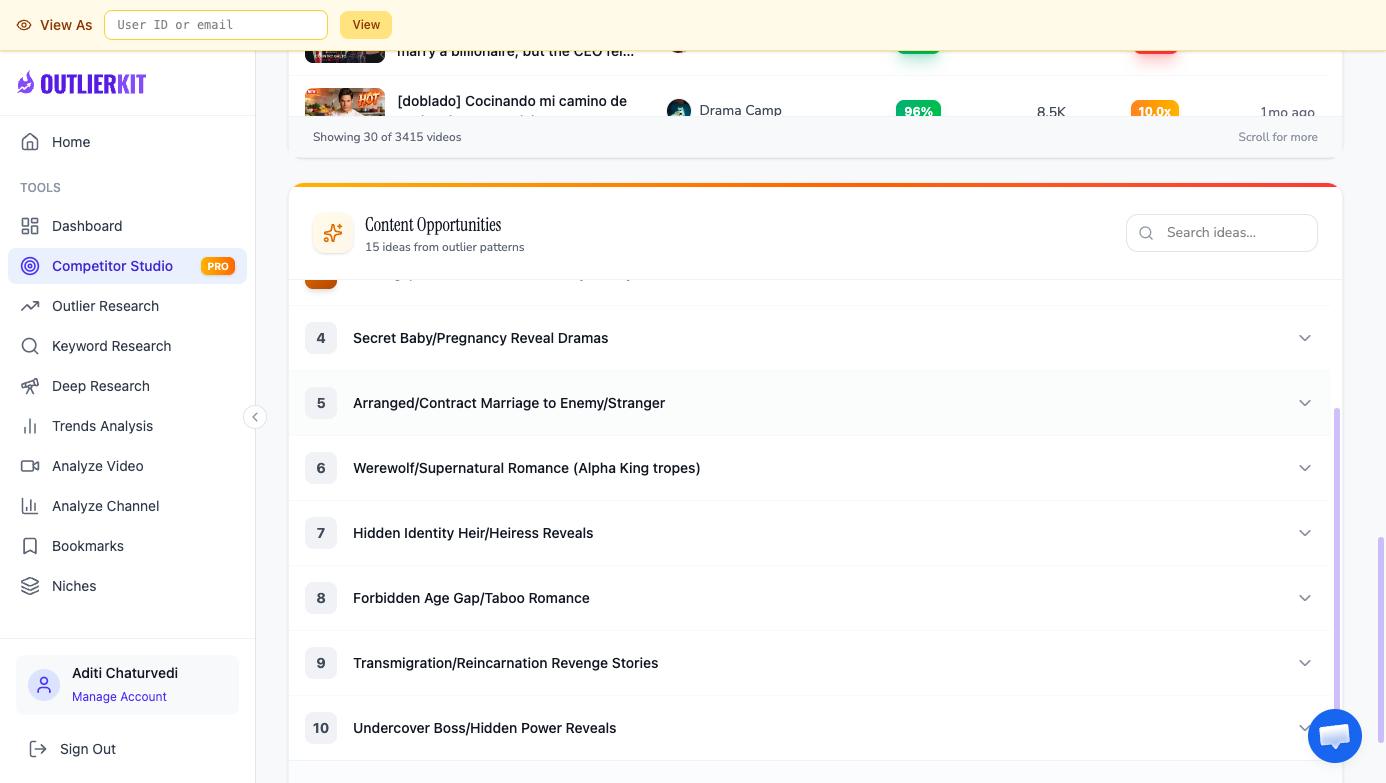

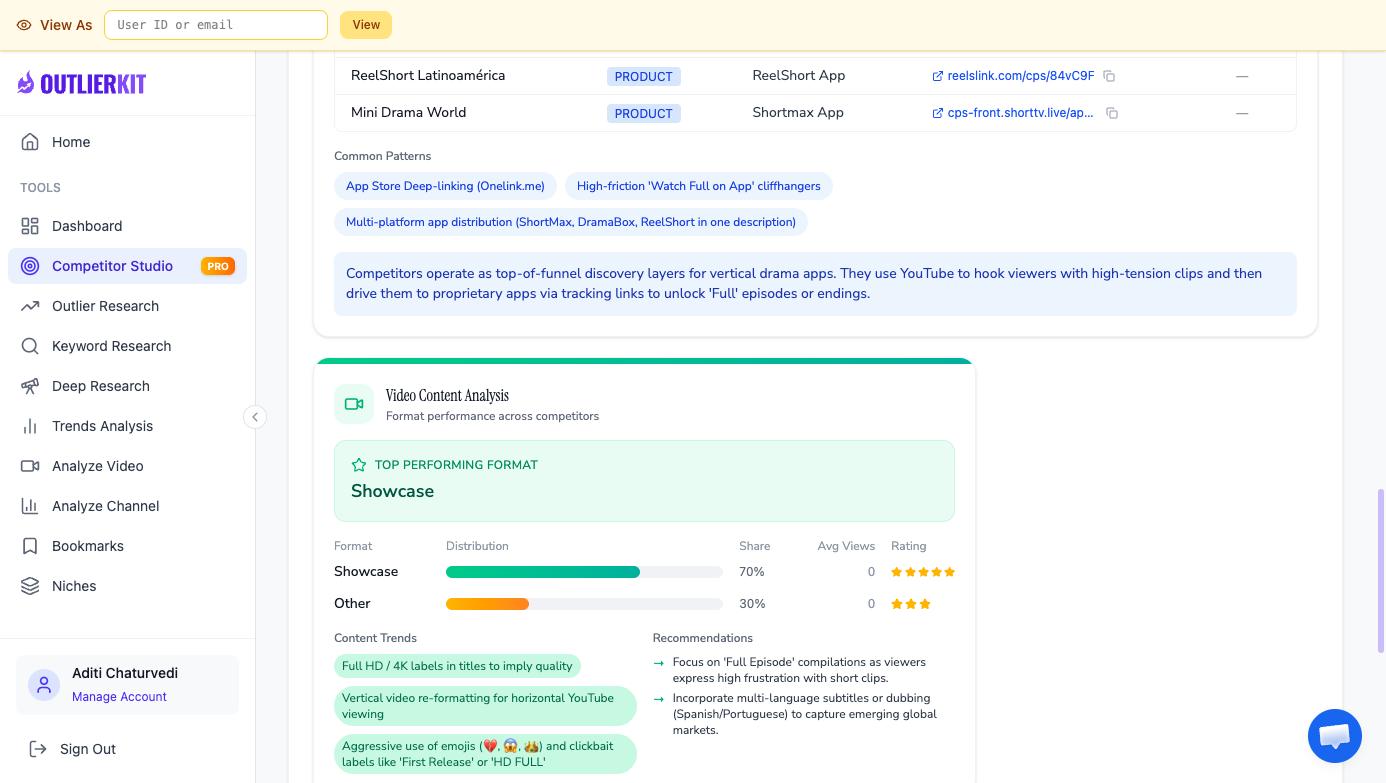

Where does OutlierKit fit into an AI micro drama workflow?

OutlierKit doesn't make video. You use it before the video work starts. It helps you find which vertical micro drama formats and hooks are beating their own channel medians by 3x to 10x. That way you spend AI time on scenes the audience already wants. The pairing: use OutlierKit's competitor studio tool to pick the script. Then use the AI tools to make it.

Finding what is working in your sub-niche

Picking tools is the second decision. The first is picking which micro drama hook and format to produce at all — because in a category this young, the difference between a script the audience has signalled demand for and a script that has not yet landed anywhere is measured in orders of magnitude of views. A full AI production pipeline spent on the wrong script is more expensive than any tool decision.

OutlierKit's competitor studio tool scans vertical micro drama channels for videos outperforming their own channel medians by 3x to 10x, which surfaces which specific hooks, episode structures, and thumbnail patterns are breaking out right now. Pair that with the vertical micro drama pillar for audience and format context, and with YouTube drama trends for 2026 for broader genre signals.
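The "3x to 10x over the channel median" idea can be sketched in a few lines. This is only an illustration of the arithmetic, not a description of how OutlierKit computes it. The view counts are invented, and `find_outliers` is a hypothetical helper.

```python
from statistics import median


def find_outliers(views_by_video: dict[str, int], threshold: float = 3.0) -> dict[str, float]:
    """Return videos whose views are at least `threshold` times the channel median."""
    channel_median = median(views_by_video.values())
    if channel_median <= 0:
        return {}
    ratios = {title: views / channel_median for title, views in views_by_video.items()}
    return {title: ratio for title, ratio in ratios.items() if ratio >= threshold}


# Invented view counts for one channel.
channel = {
    "ep1": 10_000,
    "ep2": 12_000,
    "ep3": 9_000,
    "ep4": 48_000,
    "ep5": 11_000,
}

result = find_outliers(channel)
print({title: round(ratio, 2) for title, ratio in result.items()})
```

Comparing each video to its own channel's median, not to a niche-wide number, keeps large and small channels on the same scale.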

To see what niche-wide outlier scanning looks like in practice, check the plans on the OutlierKit pricing page, or jump back to the competitor studio overview to see the full feature surface.
